You would not run a factory without quality systems, inspection processes, or standard operating procedures.

Most AI deployments lack that discipline.

The model is the engine. An engine without a car does not go anywhere useful. The harness is the car — the engineering infrastructure that connects the model to reliable output. Without it, you have a powerful machine sitting in a shed.

This post defines the harness as a standalone concept, because the term has started to mean too many different things. In this series, and in the operating discipline that makes AI shipping work, the harness is one specific thing: the three-layer infrastructure that turns a probabilistic model into a deterministic system. Specifications. Validation. Orchestration. Each layer prevents a specific failure mode. None of them is optional.

The engine and the car

The factory analogy is not rhetorical. It is the closest available description of what is actually happening inside an organization that ships AI-generated software well. A factory has the same probabilistic substrate every modern AI deployment has — workers (or models) whose output varies between runs, between sessions, between people. What makes a factory ship reliably is not that the workers are perfectly consistent. It is the infrastructure surrounding them. Specifications written before work begins. Quality gates the output passes through before it leaves the floor. A standardized workflow that sequences the steps the same way every shift.

Take any one of those away and the factory does not function. Specifications without quality gates produce hopeful paperwork. Quality gates without specifications have nothing to check against. A workflow without specifications and gates is choreographed chaos — fast, repeatable, and consistently wrong.

The same is true for AI. The model is the worker on the factory floor. It is fluent and fast, and it produces a different result every time it runs. The harness is the rest of the factory. It is what converts that probabilistic worker into a system whose output you can stake a release on.

Most AI deployments today have the engine without the car. They have a frontier model with API access, a few prompt templates, and a shared Slack channel where engineers swap what worked for them. The output varies between developers, between sessions, between attempts in the same session. The team's velocity dashboard goes up because lines are getting written. The maintenance backlog also goes up, because nothing about the workflow is repeatable. This is the demo configuration: impressive in isolation, structurally incapable of shipping.

The harness inverts this. With it, the same model produces output that can be reviewed, validated, regenerated, and trusted across teams without coordination overhead growing linearly with headcount. The model did not get smarter. The infrastructure around it got disciplined.

Layer 1: Specifications

Specifications are how you tell the AI what correct looks like before any work begins.

A specification is not a description of what the system should do. It is a versioned engineering artifact with an input contract, an output contract, acceptance criteria, edge cases, and test cases — at sufficient precision that a coding agent can regenerate the implementation deterministically. It lives in source control alongside the code it specifies. It is reviewed as a first-class artifact. When the implementation is wrong, the fix goes into the specification, not the code.
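One way to picture the difference between a description and an artifact is to write the specification down as a structured record. The sketch below is illustrative only: the `Spec` and `SpecCase` classes, their field names, and the `email_validator` example are assumptions made for this post, not a format the manuscript prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpecCase:
    """One concrete example the implementation must satisfy."""
    name: str
    given: dict
    expected: dict


@dataclass(frozen=True)
class Spec:
    """Hypothetical shape for a versioned specification artifact."""
    name: str
    version: str
    input_contract: dict        # field name -> type name
    output_contract: dict       # field name -> type name
    acceptance_criteria: tuple
    edge_cases: tuple
    test_cases: tuple

    def missing_sections(self) -> list:
        """Return the names of the sections that are still empty."""
        sections = {
            "input_contract": self.input_contract,
            "output_contract": self.output_contract,
            "acceptance_criteria": self.acceptance_criteria,
            "edge_cases": self.edge_cases,
            "test_cases": self.test_cases,
        }
        return [name for name, value in sections.items() if not value]


email_spec = Spec(
    name="email_validator",
    version="1.2.0",
    input_contract={"address": "str"},
    output_contract={"valid": "bool", "reason": "str"},
    acceptance_criteria=("rejects addresses without exactly one '@'",),
    edge_cases=("empty string", "leading or trailing whitespace"),
    test_cases=(
        SpecCase("plain address", {"address": "a@example.com"},
                 {"valid": True, "reason": ""}),
    ),
)

print(email_spec.missing_sections())  # [] because every section is filled in
```

A record like this can be checked in review the same way code is: an empty section is a visible gap rather than an unstated assumption.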

The reason this matters is mechanical, not philosophical. Large language models generate output by predicting tokens within a context window, and the attention mechanism inside the model weighs the tokens in that window when producing the next prediction. A vague request like "write a data validator" puts only a handful of instruction tokens into the context window and leaves the model to fall back on training-data patterns: generic CSV handling, typical validator shapes, standard idioms. The output approximates the statistical average of code on the internet that mentioned validators. It will not match your team's conventions, error handling patterns, or integration interfaces, because none of those things were ever in the context window to attend to.

A complete specification with type definitions, validation rules, edge cases, and integration interfaces puts the team's actual requirements into the context window as tokens that the attention heads can match against during generation. The same model, given the same task, produces categorically different output — not because the model became more intelligent, but because the conditions for generation became more precise. This is what "specifications as context" means in practice: the spec is not documentation that humans read after the fact. It is the engineered context that determines what the model can attend to during generation.

The discipline that emerges from this is what Module 3 of the manuscript calls the specify-direct-validate loop. Write the spec. Direct the agent against it. Validate the output. When validation fails, the natural instinct is to edit the generated code. Resist it. If the output is wrong, either the specification was ambiguous and the agent interpreted it differently than intended, or the specification was incomplete and the agent filled the gap with a plausible assumption. In both cases the root cause is in the specification. Fixing the code addresses the symptom; fixing the specification addresses the cause — and protects every future regeneration from making the same mistake.
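The loop can be sketched in a few lines. This is a minimal illustration under stated assumptions: `generate`, `validate`, and `revise_spec` are hypothetical callables standing in for the coding agent, the validation pipeline, and the human who edits the spec. The stand-in functions at the bottom exist only to make the sketch runnable.

```python
from typing import Callable, List, Tuple


def specify_direct_validate(
    spec: str,
    generate: Callable[[str], str],
    validate: Callable[[str], List[str]],
    revise_spec: Callable[[str, List[str]], str],
    max_rounds: int = 3,
) -> Tuple[str, str]:
    """Return (final_spec, accepted_output); raise if no round passes."""
    for _ in range(max_rounds):
        output = generate(spec)        # direct the agent against the spec
        failures = validate(output)    # deterministic gate: list of failure messages
        if not failures:
            return spec, output
        # The fix goes into the specification; the failed output is discarded.
        spec = revise_spec(spec, failures)
    raise RuntimeError(f"no passing output after {max_rounds} rounds")


# Stand-ins (assumptions for illustration, not a real agent or pipeline).
def fake_generate(spec: str) -> str:
    return "strip" if "whitespace" in spec else "raw"


def fake_validate(output: str) -> List[str]:
    return [] if output == "strip" else ["whitespace not handled"]


def fake_revise(spec: str, failures: List[str]) -> str:
    return spec + " Trim leading and trailing whitespace."


final_spec, accepted = specify_direct_validate(
    "Validate an email address.", fake_generate, fake_validate, fake_revise
)
```

The point of the shape is that the only thing carried from one round to the next is the revised specification. The rejected output never is.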

A board-level diagnostic for whether your organization has reached this layer: point a coding agent at one of your "specifications" and ask it to regenerate the component from scratch. If the regeneration drifts substantially from the production implementation, what you have is a requirements summary, not a specification. Real specifications are dense enough that regeneration converges on the existing implementation. Requirements summaries leave the model to guess, and the guesses do not match.

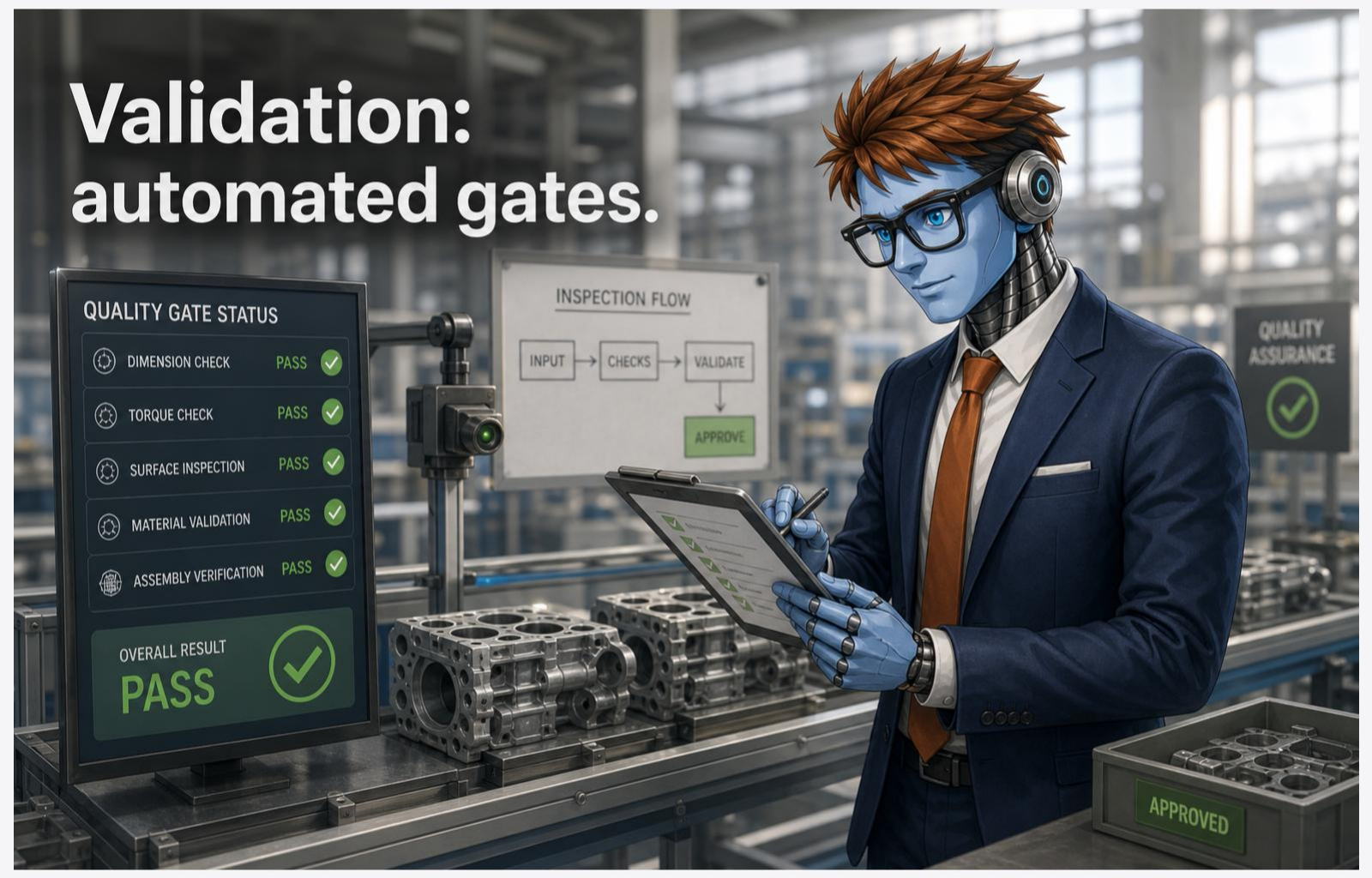

Layer 2: Validation

Validation is what enforces the specification across the lifetime of the code.

Without validation, a specification is a wish. With it, the specification becomes an enforced contract; a contract that is never enforced is just paperwork. The validation layer is the set of automated gates that decide whether the output is acceptable before it reaches production. Type checkers. Acceptance tests derived from the specification's contract. Schema validators on every structured output. Performance budgets. Security scanners. Tool boundaries that constrain what the agent is allowed to do.

The critical property of the validation layer is that it is deterministic. The model that generates code is probabilistic: the same prompt can produce different output across runs, because the model samples each token from a probability distribution rather than looking up a fixed answer. The validation layer is the deterministic skeleton that the probabilistic generation passes through before anything reaches production. Every boundary between the model and the rest of the system is a typed tool call with a defined contract: the model proposes, the validation layer disposes.
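As a rough illustration of "the model proposes, the validation layer disposes," the sketch below runs a raw model output through a fixed list of gates. The gate names, the `valid`/`reason` output shape, and the `run_gates` helper are all assumptions chosen for this example. A real pipeline would add type checkers, security scanners, and performance budgets.

```python
import json
from typing import Callable, List

Gate = Callable[[dict], List[str]]


def schema_gate(payload: dict) -> List[str]:
    """Check that required fields exist and have the declared types."""
    required = {"valid": bool, "reason": str}
    errors = []
    for key, expected_type in required.items():
        if key not in payload:
            errors.append(f"missing field: {key}")
        elif not isinstance(payload[key], expected_type):
            errors.append(f"{key} should be {expected_type.__name__}")
    return errors


def consistency_gate(payload: dict) -> List[str]:
    """A rejected input must explain why it was rejected."""
    if payload.get("valid") is False and not payload.get("reason"):
        return ["invalid result must carry a reason"]
    return []


GATES: List[Gate] = [schema_gate, consistency_gate]


def run_gates(raw_output: str, gates: List[Gate]) -> List[str]:
    """Return every failure; an empty list means the output is admissible."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(payload, dict):
        return ["top-level value must be an object"]
    failures: List[str] = []
    for gate in gates:
        failures.extend(gate(payload))
    return failures
```

The same input always yields the same verdict, which is the property the generation step cannot offer.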

A handler in the manuscript's framing is the atomic unit of this layer. It receives structured context, performs one operation, and returns a structured result. It does not reach into global state. It does not produce side effects outside its declared interface. It does not decide what runs next. It answers exactly one question: "given this typed input, what is the typed output?" The discipline this enforces is the same one Kent Beck codified in test-driven development — expected behavior is defined before the code, and the code must satisfy it.

The reason handlers must be the atomic unit, rather than the agent's free-form output, is that handlers are testable by construction. You build the input context, call the handler, and assert on the result. No environment to replicate. No globals to mock. No subjective judgment about whether the output is "good enough." The contract is the contract. This is shift-left quality assurance applied to agent output: the typed contract defines expected behavior, and the validation gate decides admissibility before a human looks at the result. What human teams enforce through process — code review, QA, integration testing — your harness enforces through design. The model can be as creative as it likes inside the handler. The boundary around the handler does not negotiate.
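A small sketch of what "testable by construction" looks like in practice. The handler, its input and result types, and the word-counting task are hypothetical examples, not code from the manuscript.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WordCountInput:
    text: str


@dataclass(frozen=True)
class WordCountResult:
    words: int
    empty: bool


def count_words(ctx: WordCountInput) -> WordCountResult:
    """One operation, typed in and typed out: no globals, no side effects, no routing."""
    words = len(ctx.text.split())
    return WordCountResult(words=words, empty=(words == 0))


# The whole test is: build the input, call the handler, assert on the result.
assert count_words(WordCountInput("read the file")) == WordCountResult(3, False)
assert count_words(WordCountInput("   ")) == WordCountResult(0, True)
```

Nothing has to be mocked or replicated because the handler can only see what arrives through its declared input.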

A test suite the agent wrote against the agent's own implementation is not a contract. It is a tautology — the agent confirming its own work. A validation pipeline written against the specification is what enforces correctness across the lifetime of the module, and it is what survives every regeneration. When the specification changes, the validation pipeline changes with it. When the implementation changes, the validation pipeline holds the line. Generation is probabilistic. Validation is deterministic. The pipeline is the part that does not drift, and it is the only piece that turns probabilistic output into reliable systems.
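To make the distinction concrete, the sketch below keeps the acceptance cases with the specification and runs them against whichever implementation the agent produced. The cases and both implementations are invented for illustration. The second one stands in for a plausible but wrong regeneration.

```python
from typing import Callable, List, Tuple

# Cases written from the specification, independently of any implementation.
SPEC_CASES: List[Tuple[dict, bool]] = [
    ({"address": "a@example.com"}, True),
    ({"address": "no-at-sign"}, False),
    ({"address": ""}, False),
]


def check_against_spec(impl: Callable[..., bool],
                       cases: List[Tuple[dict, bool]]) -> List[str]:
    """Return one message per case the implementation gets wrong."""
    failures = []
    for given, expected in cases:
        actual = impl(**given)
        if actual != expected:
            failures.append(f"{given!r}: expected {expected}, got {actual}")
    return failures


def impl_v1(address: str) -> bool:
    """First generation: exactly one '@' is required."""
    return address.count("@") == 1


def impl_v2(address: str) -> bool:
    """A later regeneration that quietly weakened the rule."""
    return len(address) > 0


v1_failures = check_against_spec(impl_v1, SPEC_CASES)
v2_failures = check_against_spec(impl_v2, SPEC_CASES)
```

Because the cases never referenced either implementation, they hold the line when the implementation is replaced.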

The diagnostic at this layer: when AI-generated code reaches production, what gates did it pass? If the answer is "code review by a senior engineer," the validation layer is human attention — which is expensive, scarce, and gets tired. If the answer is "an automated pipeline with type checks, schema validation, acceptance tests derived from the spec, and security scanners that all run before review," the validation layer is engineered. The first configuration scales linearly with headcount. The second scales with the harness.

Layer 3: Orchestration

Orchestration is how the workflow gets executed the same way every time.

A specification tells the agent what correct looks like. A validation gate decides whether the output meets the standard. Orchestration is what sequences specifications, agents, and validation gates into a workflow that produces consistent end-to-end execution across runs, across teams, and across the personnel changes that would otherwise erase the workflow's institutional memory.

The instinct, when connecting multiple operations, is to write a script: read the file, then analyze it, then produce the report. Line 1 calls the reader. Line 2 calls the analyzer. Line 3 calls the reporter. Then a condition gets added — skip analysis if the file is empty. Then a parallel branch — run two analyzers concurrently. Then error recovery — if analysis fails, log the error and continue. Each addition nests another if/else, spawns another thread, wraps another try/catch, until the control flow buries the actual work. At twenty operations, the script is unmaintainable. At fifty, no one can trace the execution path by reading it.

The discipline that replaces the script is the workflow graph — a directed acyclic graph where nodes declare what happens and edges declare when. The graph is itself a specification, this time for execution rather than for behavior. The orchestration engine reads the graph, determines execution order, evaluates edge conditions against deterministic values, and executes each node. The developer does not write branching logic. The developer defines the graph, and the engine interprets it.

This separation is what makes the orchestration layer reliable. The handler inside each node may be creative, exploratory, even unpredictable; an agent generating code will produce different output each time. But the workflow routes based on deterministic, tool-produced values, not on the model's opinion. The graph is the deterministic skeleton around probabilistic nodes. Routing logic lives apart from generation logic, operating entirely in the deterministic domain. That is why a thirty-node workflow can be traced the same way as a three-node workflow, one edge at a time, while an imperative orchestration script of that size becomes unreadable.
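A toy version of the read, analyze, report workflow shows the separation. This is a sketch under assumptions: the `run_graph` engine, the node handlers, and the edge conditions are hypothetical, and the standard-library `graphlib` module (Python 3.9+) is used only to compute execution order and reject cycles.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Tuple

Handler = Callable[[dict], dict]
Edge = Tuple[str, str, Callable[[dict], bool]]  # (source, target, condition)

NODES: Dict[str, Handler] = {
    "read": lambda s: {"size": len(s["text"])},
    "analyze": lambda s: {"words": len(s["text"].split())},
    "report": lambda s: {"report": f"{s.get('words', 0)} words"},
}

EDGES: List[Edge] = [
    ("read", "analyze", lambda s: s["size"] > 0),   # skip analysis on empty input
    ("analyze", "report", lambda s: True),
    ("read", "report", lambda s: s["size"] == 0),   # empty input goes straight to report
]


def run_graph(nodes: Dict[str, Handler], edges: List[Edge], state: dict) -> List[str]:
    """Execute nodes in dependency order; return the names that actually ran."""
    predecessors = {name: set() for name in nodes}
    for source, target, _ in edges:
        predecessors[target].add(source)

    executed: List[str] = []
    # static_order() raises graphlib.CycleError if the graph is not acyclic.
    for name in TopologicalSorter(predecessors).static_order():
        incoming = [(src, cond) for src, dst, cond in edges if dst == name]
        # A node runs if it is a root, or if any executed predecessor's
        # edge condition holds on the current (tool-produced) state.
        ready = not incoming or any(
            src in executed and cond(state) for src, cond in incoming
        )
        if ready:
            state.update(nodes[name](state))
            executed.append(name)
    return executed
```

Adding the "skip analysis if the file is empty" rule meant adding an edge condition, not nesting another branch inside the work itself.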

The orchestration layer also produces something that imperative orchestration cannot: a structured execution history. Every step records its inputs, its outputs, its tool calls, its state mutations, and the timestamp at which each happened. When something breaks at 3 AM, the response is not "read the code and try to reconstruct what the original developer was thinking." It is "read the spec, replay the validation pipeline against the recorded outputs, and locate the gap." Maintenance becomes a structured operation instead of an archaeological one.
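What a structured execution history might look like, in miniature. The `StepRecord` fields, the `run_recorded` wrapper, and the `replay` helper are assumptions for illustration. A production engine would also capture tool calls and state mutations.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Callable, Dict, List


@dataclass
class StepRecord:
    step: str
    inputs: dict
    outputs: dict
    started_at: str  # ISO 8601 timestamp, UTC


def run_recorded(step: str, handler: Callable[[dict], dict],
                 inputs: dict, history: List[StepRecord]) -> dict:
    """Run one step and append a record of what went in and what came out."""
    started = datetime.now(timezone.utc).isoformat()
    outputs = handler(inputs)
    history.append(StepRecord(step, dict(inputs), dict(outputs), started))
    return outputs


def replay(history: List[StepRecord],
           validators: Dict[str, Callable[[dict], bool]]) -> List[str]:
    """Re-run validators against recorded outputs; return the steps that fail."""
    return [
        record.step
        for record in history
        if record.step in validators and not validators[record.step](record.outputs)
    ]


history: List[StepRecord] = []
counted = run_recorded("count", lambda i: {"words": len(i["text"].split())},
                       {"text": "a b"}, history)
run_recorded("report", lambda i: {"report": ""}, counted, history)  # a faulty step

failing = replay(history, {
    "count": lambda out: out["words"] >= 0,
    "report": lambda out: bool(out["report"]),
})
serialized = json.dumps([asdict(r) for r in history])  # records are plain data
```

Here `replay` points at the `report` step without anyone rerunning the workflow or rereading the handler code.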

The diagnostic at this layer: when the same multi-step task runs twice, do the two runs produce the same artifacts in the same sequence? In a Stage 1 organization, the answer is no — each run is a fresh chat session with whatever prompt the engineer happened to write. In a harnessed organization, the answer is yes, because the workflow graph is the source of truth for execution and the engine runs it the same way every time.

Why all three layers, and not any one

Each layer prevents a specific failure mode, and each one is necessary because the other two cannot prevent that mode on their own.

Specifications without validation produce hopeful documentation. The spec describes what correct looks like, but nothing in the system enforces it. The agent generates code, the code might satisfy the spec or it might not, and there is no automated way to tell. A senior engineer eventually notices the drift and patches the implementation, the patch becomes tribal knowledge, and the spec drifts further. Within a quarter, the spec and the code disagree, and the spec is now lying — which is worse than no spec at all, because it gives leadership false confidence that the discipline is in place.

Validation without specifications has nothing to check against. The validation gates exist, but they were derived from the agent's own output, which means the gates pass anything the agent produces. A test suite written by the agent for its own implementation is a tautology, not a contract. The validation layer cannot do its job because the standard it is supposed to enforce was never written down independently of the implementation. The gates run, the gates pass, the production code is wrong anyway.

Orchestration without specifications and validation is just choreographed chaos. The workflow runs the same way every time, but every run produces unreliable output through a sequence of probabilistic agents, each one operating without a specification to constrain its behavior or a validation gate to enforce the result. The execution is repeatable. The output is not. This is the configuration that produces velocity dashboards that look good for a quarter and crisis dashboards that look terrible for the four that follow, because the surface area of unreviewed code is now growing predictably and at scale.

The three layers are not three independent investments. They are a single system property — reliability — decomposed into the three places where it has to be enforced. Take any one away and the property does not exist. The question for leadership is not which of the three to start with. The question is whether all three are present, integrated, and enforced.

The leadership move

Most teams invest in the model and skip the infrastructure around it. The procurement spend is visible: a budget line, a vendor relationship, a slide for the board. The harness investment is invisible. It is the engineering hours spent writing specifications that did not exist before, building validation pipelines for outputs that previously went unchecked, and replacing imperative orchestration scripts with declarative graphs. None of those line items shows up on a tool-adoption dashboard. Together they determine whether AI changes the economics of the work or just adds a new vendor invoice.

The organizations shipping reliably invest in the harness first — because the model without the harness produces demos that fail in production, and the next model upgrade will not fix that. The next-generation model produces better output inside a harness because the harness gives it more useful context to attend to. It produces marginally better demos outside one. The leverage point for AI economics is not the model. It is the engineering infrastructure that determines whether the model's output can be trusted, regenerated, and shipped.

The model is available to everyone. The harness is the leadership decision.

Harness, not hope.

Are you still investing in the engine without building the car?