Your job posting says "AI experience preferred."

That is the wrong skills gap.

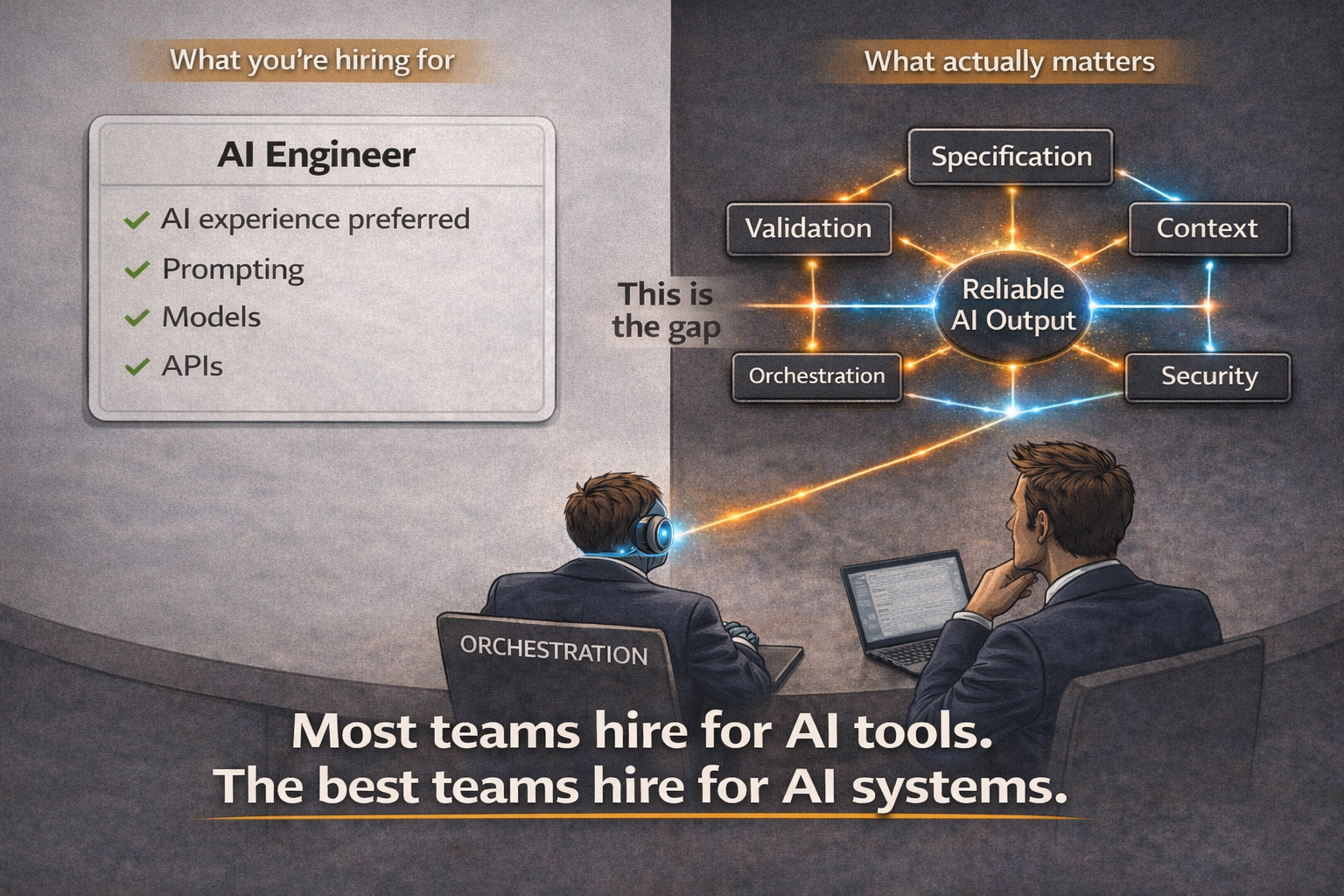

Most teams think they're hiring for AI. They're not. The scarcest capability in engineering right now is not prompt writing, model tuning, or tool familiarity. It is the ability to design systems that make AI output reliable.

Why the gap is in the wrong place

"AI experience" as a hiring signal is a relic of the old scarcity model. When the bottleneck was implementation capacity, familiarity with a new tool was a reasonable proxy for productivity — if you knew the tool, you could type code faster with it. That calculus doesn't hold when a coding agent can generate a thousand lines of correct implementation from a well-written specification.

The bottleneck has moved. It is no longer "can this person use the model?" It is "can this person design a system around the model such that its output is reliable enough to ship?"

That is a different skillset. It does not show up on any "years of Python" line. It shows up in whether the candidate can tell you, in operational detail, how they would make a probabilistic generator behave like a deterministic component.

Most teams hire for AI tools. The best teams hire for AI systems.

Five competencies that don't appear on most job descriptions yet

1. Specification design. Requirements precise enough that AI output can be verified.

An Architect-CEO-grade specification defines the input contract, the output contract, the acceptance criteria, three example input-output pairs, and a handful of edge cases — all in a Markdown file in version control. It specifies behavior, not implementation. When the coding agent's output is wrong, the fix is in the specification, not the code. The competency is the ability to write the kind of document a Kent Beck disciple would recognize as a test before the test exists: unambiguous, testable, exhaustive at the boundary. Most candidates have never written one. The ones who have stand out immediately.
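One way to picture "a test before the test exists" is to treat the specification's examples and edge cases as data that any candidate implementation can be checked against. The sketch below is illustrative only: the `Spec` and `Example` types, the `failures` helper, and the `slugify` feature are all hypothetical names chosen for this example, and in practice the same fields would live in the Markdown file described above.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Example:
    given: str
    expected: str


@dataclass(frozen=True)
class Spec:
    """Behavior only: contracts, criteria, examples, edge cases. No implementation."""
    name: str
    input_contract: str
    output_contract: str
    acceptance_criteria: tuple[str, ...]
    examples: tuple[Example, ...]
    edge_cases: tuple[Example, ...] = ()


def failures(spec: Spec, impl: Callable[[str], str]) -> list[Example]:
    """Return every example or edge case the implementation gets wrong."""
    return [ex for ex in spec.examples + spec.edge_cases
            if impl(ex.given) != ex.expected]


# Hypothetical feature used purely for illustration.
SLUGIFY_SPEC = Spec(
    name="slugify",
    input_contract="any str",
    output_contract="lowercase ASCII letters and digits separated by single hyphens",
    acceptance_criteria=("no leading or trailing hyphen", "no consecutive hyphens"),
    examples=(
        Example("Hello World", "hello-world"),
        Example("AI, explained", "ai-explained"),
        Example("Q3 report", "q3-report"),
    ),
    edge_cases=(
        Example("", ""),
        Example("  --  ", ""),
        Example("a  b", "a-b"),
    ),
)


def candidate_slugify(text: str) -> str:
    """Stand-in for agent-generated code under review."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
```

Calling `failures(SLUGIFY_SPEC, candidate_slugify)` returns an empty list when the candidate satisfies every example. A non-empty list points at either a wrong implementation or an ambiguous spec, which is the distinction this competency is about.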

2. Validation architecture. Automated checks that catch errors before they ship.

A probabilistic generator becomes a deterministic component only when deterministic tools enforce the spec. Validation architecture is the discipline of designing the pipeline of tests, type checks, linters, security scans, performance profiles, and guard conditions that sit between agent output and production. It is not about writing more tests. It is about deciding which invariants must hold, which checks catch which classes of failure, and what the gate-fail policy is when a check rejects a diff. Engineers with this skill can ship AI-generated code with lower defect rates than hand-written code. Engineers without it ship demos.
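A minimal sketch of a gate with an explicit gate-fail policy might look like the following. It uses only Python's standard `ast` module. The `Check`, `Policy`, `GateResult`, and `run_gate` names are assumptions made for this example, and the three checks stand in for the real tests, linters, and scanners a team would wire up.

```python
import ast
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Policy(Enum):
    BLOCK = "block"  # a failure rejects the change
    WARN = "warn"    # a failure is reported but does not reject


@dataclass(frozen=True)
class Check:
    name: str
    run: Callable[[str], bool]  # True means the source passed
    on_fail: Policy


@dataclass(frozen=True)
class GateResult:
    accepted: bool
    blocked_by: tuple[str, ...]
    warnings: tuple[str, ...]


def parses(source: str) -> bool:
    try:
        ast.parse(source)
        return True
    except SyntaxError:
        return False


def no_eval_or_exec(source: str) -> bool:
    """Invariant: generated code never calls eval() or exec() directly."""
    if not parses(source):
        return False
    return not any(
        isinstance(node, ast.Call)
        and isinstance(node.func, ast.Name)
        and node.func.id in {"eval", "exec"}
        for node in ast.walk(ast.parse(source))
    )


def functions_documented(source: str) -> bool:
    if not parses(source):
        return False
    funcs = [n for n in ast.walk(ast.parse(source))
             if isinstance(n, (ast.FunctionDef, ast.AsyncFunctionDef))]
    return all(ast.get_docstring(f) for f in funcs)


CHECKS = [
    Check("parses", parses, Policy.BLOCK),
    Check("no_eval_or_exec", no_eval_or_exec, Policy.BLOCK),
    Check("functions_documented", functions_documented, Policy.WARN),
]


def run_gate(source: str, checks: list[Check] = CHECKS) -> GateResult:
    """Run every check and apply each check's own fail policy."""
    blocked, warned = [], []
    for check in checks:
        if not check.run(source):
            (blocked if check.on_fail is Policy.BLOCK else warned).append(check.name)
    return GateResult(not blocked, tuple(blocked), tuple(warned))
```

The design choice worth noticing is that the policy is attached to each check rather than buried in the runner, so deciding which failures block a release stays a reviewable decision.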

3. Context engineering. Structuring memory, constraints, and inputs for consistency.

The attention mechanism inside the model assigns weight by relevance. What reaches the context window determines what gets attended to, and therefore what the output looks like. Context engineering is the discipline of deciding what to include, what to exclude, and how to structure what remains — building typed state, memory fragments, structured outputs from prior steps, and documentation files that the model treats as first-class context. The candidate who can reason about context as a designed artifact rather than a chat log is the candidate who can make outputs repeatable across sessions.
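Treating context as a designed artifact can be sketched as a function that assembles typed fragments under a budget, in a fixed order, so the same inputs always yield the same context. Everything here is an assumption made for illustration: the `Fragment` type, the priority scheme, and the use of character count as a rough stand-in for a token budget.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fragment:
    kind: str      # e.g. "spec", "memory", "prior_output"
    text: str
    priority: int  # lower number means more important


def build_context(fragments: list[Fragment], budget_chars: int) -> str:
    """Assemble context deterministically: most important first, within budget."""
    sections = []
    used = 0
    # Sorting on a full key makes the result independent of input order.
    for frag in sorted(fragments, key=lambda f: (f.priority, f.kind, f.text)):
        rendered = f"## {frag.kind}\n{frag.text}\n"
        if used + len(rendered) > budget_chars:
            continue  # excluded by design, not by accident
        sections.append(rendered)
        used += len(rendered)
    return "".join(sections)


FRAGMENTS = [
    Fragment("chat_log", "x" * 500, priority=9),
    Fragment("memory", "Totals are in cents, never floats.", priority=1),
    Fragment("spec", "Output must be valid JSON with keys id and total.", priority=0),
]

context = build_context(FRAGMENTS, budget_chars=200)
```

In this example the spec and the memory fragment fit within the budget and the long chat log is left out, regardless of the order in which the fragments were supplied.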

4. Orchestration design. Coordinating multiple agents into reliable workflows.

Production systems outgrow a single context window. When you split work across agents, you split context across windows, and the coordination between those windows becomes the system. Orchestration design is the skill of building workflow graphs: fan-out nodes that parallelize work, reducers that merge the results, typed messages between handlers, correlation IDs that trace a task end-to-end, lease-based execution that detects crashes and reassigns work. Done well, this is what lets one person direct a fleet of coding agents the way a CEO directs a company. Done badly, it is the reason multi-agent pilots fail.
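A small sketch of the fan-out and reduce pattern with typed messages and a correlation ID is shown below, using the standard library's `concurrent.futures`. The `Task` and `Result` types and the `worker` function are hypothetical. The worker is a stand-in for a real agent call, and lease-based crash detection is deliberately left out to keep the example short.

```python
import uuid
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Task:
    correlation_id: str
    shard: int
    payload: str


@dataclass(frozen=True)
class Result:
    correlation_id: str
    shard: int
    output: str


def worker(task: Task) -> Result:
    """Stand-in for one agent handling one shard of the work."""
    return Result(task.correlation_id, task.shard, task.payload.upper())


def fan_out(payloads: list[str], correlation_id: str) -> list[Task]:
    return [Task(correlation_id, i, p) for i, p in enumerate(payloads)]


def reduce_results(results: list[Result], correlation_id: str, expected: int) -> str:
    """Merge shard outputs, refusing results that are foreign, missing, or duplicated."""
    if any(r.correlation_id != correlation_id for r in results):
        raise ValueError("result from a different workflow run")
    if sorted(r.shard for r in results) != list(range(expected)):
        raise ValueError("missing or duplicate shard")
    return " ".join(r.output for r in sorted(results, key=lambda r: r.shard))


def run_workflow(payloads: list[str],
                 handler: Callable[[Task], Result] = worker) -> tuple[str, str]:
    correlation_id = uuid.uuid4().hex  # traces this run end to end
    tasks = fan_out(payloads, correlation_id)
    with ThreadPoolExecutor(max_workers=4) as pool:
        results = list(pool.map(handler, tasks))
    return correlation_id, reduce_results(results, correlation_id, len(tasks))
```

The reducer is where coordination failures surface: it rejects a merge when a shard is missing or belongs to another run instead of silently producing partial output.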

5. Security and control design. Identifying attack surfaces and building safeguards.

Coding agents take action on systems. They read files, call APIs, write to databases, and invoke tools. Every one of those actions is an attack surface for prompt injection, unintended tool use, exfiltration via structured outputs, or privilege escalation through inherited context. Security design in an agentic system is not an afterthought; it is the set of boundary conditions on every tool interface the agent touches. Handler purity, controlled side effects through injected interfaces, least-privilege tool scopes, and human-in-the-loop gates at destructive boundaries are the primitives. Candidates who can reason about them are rare, and they are the ones you want when the things your agent can do include "modify production data."
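Several of these primitives can be sketched in one small gateway that sits between the agent and its tools. The `Tool` and `ToolGateway` names, the two example tools, and the `approve` callback are all hypothetical. The callback stands in for whatever human review step a team actually uses.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class Tool:
    name: str
    run: Callable[[str], str]
    destructive: bool = False


class ToolGateway:
    """The only path from agent to side effects; everything is injected."""

    def __init__(self, tools: Iterable[Tool], allowed: Iterable[str],
                 approve: Callable[[str, str], bool]) -> None:
        self._tools = {t.name: t for t in tools}
        self._allowed = frozenset(allowed)  # least-privilege scope
        self._approve = approve             # human-in-the-loop gate

    def call(self, name: str, arg: str) -> str:
        if name not in self._allowed or name not in self._tools:
            raise PermissionError(f"tool not in scope: {name}")
        tool = self._tools[name]
        if tool.destructive and not self._approve(name, arg):
            raise PermissionError(f"approval denied: {name}")
        return tool.run(arg)


gateway = ToolGateway(
    tools=[
        Tool("read_file", lambda path: f"contents of {path}"),
        Tool("delete_rows", lambda table: f"deleted {table}", destructive=True),
    ],
    allowed={"read_file", "delete_rows"},
    approve=lambda name, arg: False,  # stand-in for a reviewer who declines
)
```

Here `gateway.call("read_file", "a.txt")` succeeds, while a call to `delete_rows` raises `PermissionError` because the reviewer declined, and any tool outside the allowed scope is refused before it runs.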

How to interview for these skills

The interview question that separates the Architect-CEO candidate from the AI-familiar candidate is not "tell me about your favorite model" or "have you used Copilot?"

It is: "Walk me through how you would build a feature you've never built before, using coding agents, such that you would feel safe deploying the output on Friday afternoon."

Listen for: Do they start by writing a specification, or by prompting? Do they describe a validation pipeline, or say "I would review the code"? Do they talk about context structure and memory fragments, or about clever prompts? Do they mention fan-out, reducers, correlation IDs, or do they describe "one long conversation"? Do they identify the tool boundaries where the agent could cause damage, or do they assume the model will behave?

The right answers sound like systems engineering, not AI punditry.

What to do this quarter

Rewrite one open engineering requisition around the five competencies. Take out years-of-language. Put in specification design, validation architecture, orchestration design. See what the candidate pool looks like when you stop screening for the old scarcity.

Name the five competencies on your current ladder. If your promotion criteria still reward lines-shipped and PR-review-volume, the ladder is rewarding the wrong work. Add categories for specifications authored, validation pipelines tightened, workflows orchestrated.

Audit your existing senior engineers against the five. The ones already thinking this way are your Architect-CEO candidates. The ones who aren't yet are a role-design problem, not a training problem. Give them the authority and the harness to start operating at that level.

The hiring mistake, and the fix

You don't write code. You architect the system that writes it — and these are the skills that make that system work.

AI changes software economics only when leadership changes what it hires, rewards, and promotes.

Are you still hiring for the old skillset?