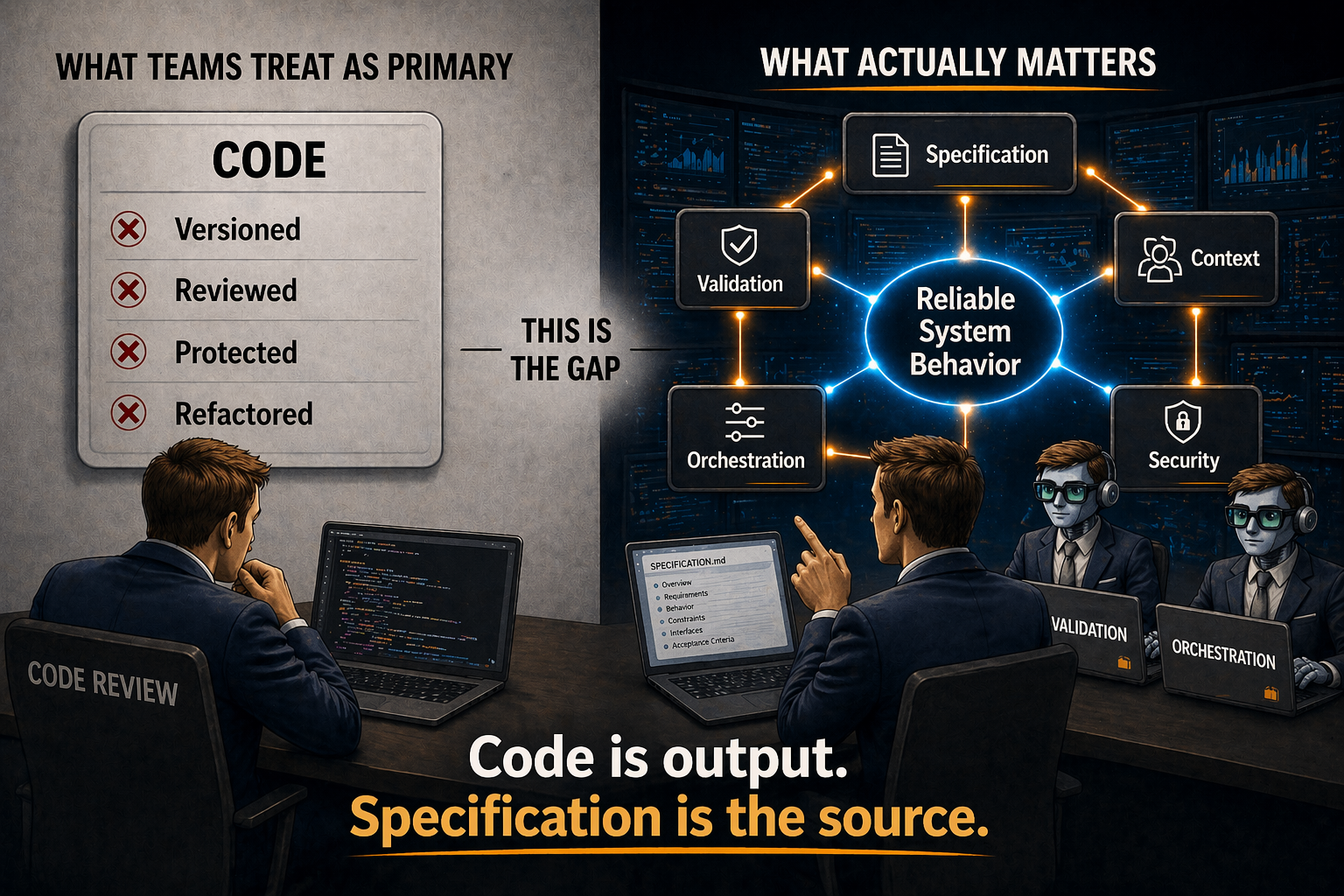

For decades, source code was the artifact that mattered.

It was the thing you versioned, reviewed, protected, and handed off. Everything else — design docs, tickets, whiteboard sketches — decayed the moment it left the room. Code was the one document that stayed in sync with reality because reality was literally compiled from it.

That relationship is inverting.

The artifact shifts

More than two decades ago, the best teams promoted tests to the same tier as code. Kent Beck's test-driven development made expected behavior a first-class artifact, not a trailing one. The test told you what the code was for. The code was constrained by the test.

Specification-driven development extends that pattern one more level. The specification defines intended behavior. The test suite enforces it. The code implements it — and can be regenerated from the specification at any time.

In engineered AI systems, the specification is the primary artifact. Code is generated output. A derivative, not the source of truth.

Code becomes a derivative

Consider what it means that a coding agent can regenerate a whole codebase from a specification.

It means the specification encodes the thing your organization actually cares about: what the system is supposed to do. Code encodes how one particular agent happened to render that intent on one particular afternoon. Change the language. Change the framework. Change the style guide. Regenerate. The specification survives every migration that used to require a rewrite.

If that sounds like a bold claim, look at what is already shipping. In February 2026, an OpenAI internal case study described a team of three engineers — later expanded to seven — producing roughly one million lines of code in five months without writing a single line by hand. Their job, in their own words, was "harness engineering": design the specifications, encode architectural constraints as automated rules, build validation pipelines, regenerate the code. Throughput increased as the team grew. The specifications were authoritative. The code was the output.

That pattern — specifications as the artifact, code as the rendering — is not a forecast. It is shipping.

What changes when the spec is the source

Treating the specification as the primary artifact has four concrete consequences. None of them is theoretical.

Version control moves upstream. Git history becomes most valuable at the specification level. You still version the code — you have to — but the meaningful diff, the one that tells you what the system is supposed to do differently this week, is in the spec. Code diffs become regeneration noise. A specification diff is a requirements change.

Code review moves upstream. Reviewing generated code, line by line, is an increasingly strange activity. The interesting review question is whether the specification describes the system you intend to operate. Did you state the edge cases? Did you constrain behavior without constraining mechanism? A good specification review prevents a hundred hours of downstream rework. A line-by-line review of agent-generated code catches what a compiler would have caught.

IP protection centers on design, not rendering. For decades, a company's valuable software assets lived in its source tree. Now they live in the specifications that describe how the system should behave and in the validation harness that enforces those specifications. The rendering is cheap. The specification is the accumulated judgment of every engineer who ever thought hard about the system.

Requirements change becomes regeneration, not refactoring. In the old model, a requirements change meant code archaeology. Locate every place the old behavior was encoded. Change each one. Hope you found them all. In the new model, you update the specification, regenerate, and let the validation harness catch the mismatches. The old skill was tracing a requirement through a mature codebase. The new skill is writing a specification precise enough that the regeneration is boring.
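The update-regenerate-validate loop can be sketched in a few lines. This is a minimal illustration, not anyone's production pipeline: `regenerate_until_valid`, `fake_agent`, `harness`, and the `greet` requirement are all hypothetical names invented here, and the "agent" is a stub that gets the requirement wrong on its first attempt so the loop has something to reject.

```python
from typing import Callable, Optional


def regenerate_until_valid(
    spec: str,
    generate: Callable[[str, int], str],
    validate: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Ask the generator for a rendering of the spec until the harness accepts one."""
    for attempt in range(max_attempts):
        candidate = generate(spec, attempt)
        if validate(candidate):
            return candidate
    # Nothing passed: the fix belongs in the spec or the harness, not in the code.
    return None


def fake_agent(spec: str, attempt: int) -> str:
    """Stand-in for a coding agent (hypothetical). Its first rendering misses the new requirement."""
    if attempt == 0:
        return "def greet(name):\n    return 'Hello ' + name\n"
    return "def greet(name):\n    return 'Hello, ' + name + '!'\n"


def harness(source: str) -> bool:
    """Deterministic check for the one requirement in SPEC_V2.

    A real harness would run generated code in a sandbox rather than a bare exec.
    """
    namespace: dict = {}
    exec(source, namespace)
    return namespace["greet"]("Ada") == "Hello, Ada!"


# The requirements change is a one-line edit to the specification.
SPEC_V2 = "greet(name) returns 'Hello, <name>!'"
accepted = regenerate_until_valid(SPEC_V2, fake_agent, harness)
```

Here the first rendering is rejected and the second is accepted. No one hunts through the code for the old behavior; the harness does the finding.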

The spec is text. What makes it reliable is tools.

An obvious objection: specifications are just words. Words are not software. A coding agent's interpretation of those words is probabilistic. How does that produce reliable systems?

The answer is that the specification is never the whole story. What makes the system reliable is the pairing of a precise specification with a deterministic validation harness — test suites that return pass/fail, type checkers that accept or reject, linters that flag or approve, guard conditions that decide whether output reaches production. The specification defines intent. The tools enforce it. Every boundary between components is a deterministic check with a typed contract.
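As a rough sketch of what "a deterministic check with a typed contract" might look like, the gate below runs every check against a piece of generated source and lets it through only if all of them pass. The names (`CheckResult`, `gate`, `may_ship`) and the `slugify` spec clause are assumptions made up for this example. A real harness would call out to an actual test runner, type checker, and linter, and would sandbox the generated code.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class CheckResult:
    """Typed contract for every check: a name, a verdict, and an optional reason."""
    name: str
    passed: bool
    detail: str = ""


# A check maps generated source text to a pass/fail verdict.
Check = Callable[[str], CheckResult]


def compiles(source: str) -> CheckResult:
    """Reject output that is not even valid Python."""
    try:
        compile(source, "<generated>", "exec")
        return CheckResult("compiles", True)
    except SyntaxError as exc:
        return CheckResult("compiles", False, str(exc))


def behaves_as_specified(source: str) -> CheckResult:
    """Hypothetical spec clause: slugify('Hello World') == 'hello-world'."""
    namespace: dict = {}
    try:
        exec(source, namespace)  # illustration only; sandbox this in practice
        ok = namespace["slugify"]("Hello World") == "hello-world"
        return CheckResult("spec:slugify", ok, "" if ok else "wrong output")
    except Exception as exc:
        return CheckResult("spec:slugify", False, repr(exc))


CHECKS: List[Check] = [compiles, behaves_as_specified]


def gate(source: str, checks: List[Check]) -> List[CheckResult]:
    """Run every check; the same source always yields the same results."""
    return [check(source) for check in checks]


def may_ship(results: List[CheckResult]) -> bool:
    """Guard condition: output reaches production only if every check passed."""
    return all(result.passed for result in results)


good = "def slugify(s):\n    return s.lower().replace(' ', '-')\n"
bad = "def slugify(s):\n    return s\n"
```

With these inputs, `may_ship(gate(good, CHECKS))` is true and `may_ship(gate(bad, CHECKS))` is false. The generator can be as probabilistic as it likes; the gate gives the same answer for the same output every time.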

This is the pattern that runs through every engineered AI system that actually works: probabilistic generation bounded by deterministic verification. The specification is not magically reliable on its own. It is reliable because the harness around it accepts only the output that passes every deterministic check and rejects the rest.

Teams that skip the harness and ship from vibes are not doing spec-driven development. They are doing vibe coding with extra steps.

This is not about writing less code

The move from code-as-artifact to spec-as-artifact is not a productivity hack — not "AI types faster than humans." It is a reorganization of where engineering judgment gets applied.

Under the old model, the best engineers spent their time writing the most important code. Under the new model, the best engineers spend their time writing the specifications the code is generated from, the validation harness the code has to survive, and the architectural constraints that keep the system coherent across generations. The code still gets written. It just gets written by the agent, under close supervision, from a document that represents the team's actual intent.

When the output is wrong, fix the specification or the validation rules, not the code. Specify. Direct. Validate.

The phrase is short. The organizational shift behind it is not.

The leadership move

Ask your team this: what is the most valuable artifact our engineering organization produces?

If the answer is "the code," you are operating under the old model. If the answer is "the specifications that define what the code should do" — and your team can point to where those specifications live, how they are reviewed, and how the validation harness enforces them — you are operating under the new one.

The teams pulling ahead invest their best thinking upstream. Code is downstream.