AI creates a new compliance problem.

It also creates a better answer to that problem.

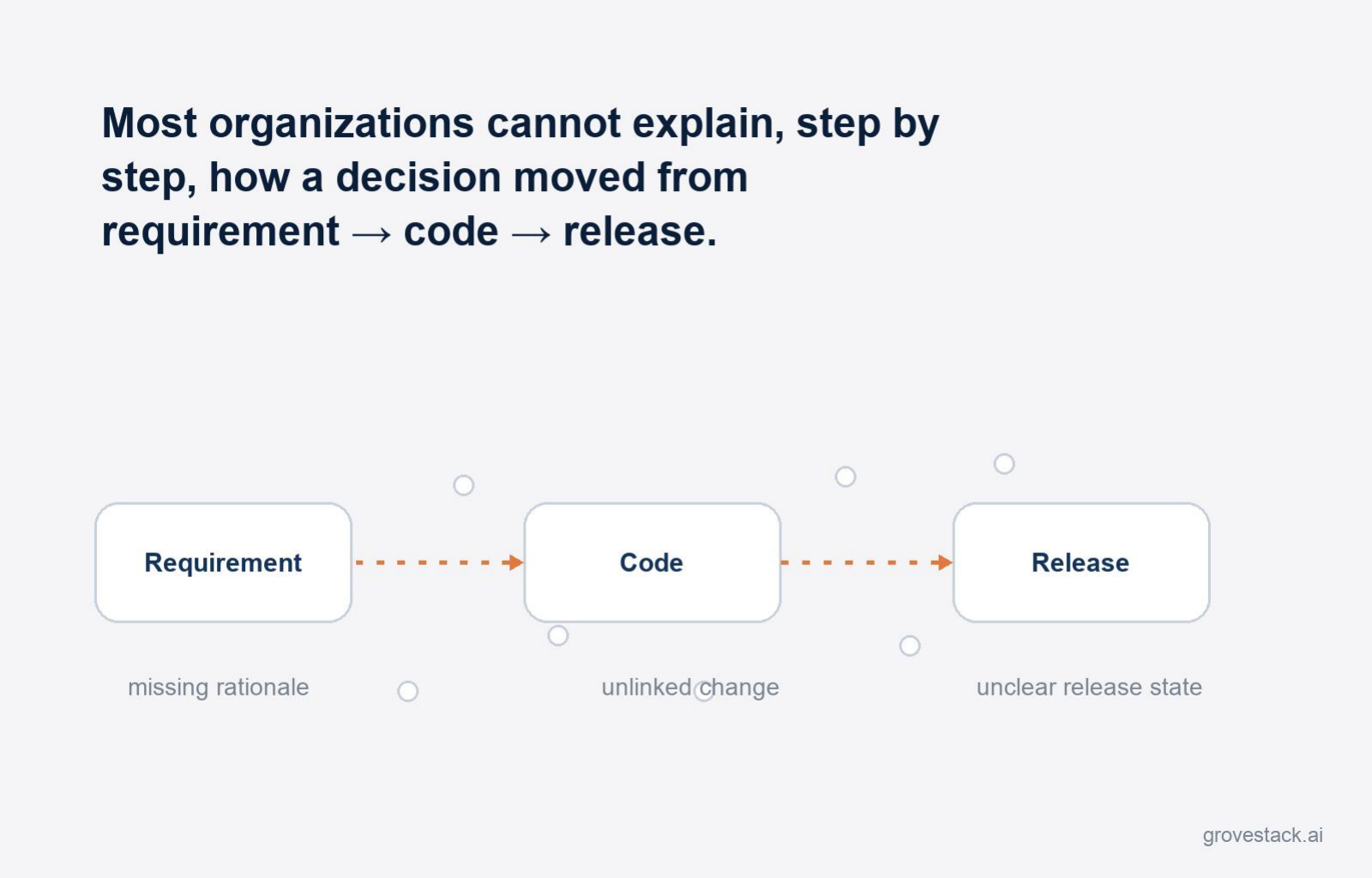

Most software organizations still cannot explain, step by step, how a decision moved from requirement to code to release. That was survivable when work lived in human heads, ticket comments, and scattered reviews. The compliance gap was real, but the regulators tolerated it because everybody had the same gap. You wrote down enough, you reviewed enough, and the audit accepted that the rest lived in the social fabric of the team.

That tolerance breaks down fast once AI starts participating in multi-step pipelines. The agents do not have the social fabric. They produce output without ticket comments. They make decisions without Slack threads. They generate code without pair-programming context. The compliance gap that was survivable for human teams becomes a compliance failure for agent-driven teams, because the regulator does not have an alternative source of truth to fall back on. The team's institutional memory cannot be subpoenaed. The agent's memory does not exist.

Most organizations notice this problem after they have already adopted agents and someone from compliance asks the obvious question: how do we know what the AI did? The teams that have to scramble at that point are the teams that wired agents on top of the existing logging infrastructure and assumed it would be sufficient. It is not. Logging tells you what happened in some places, sometimes. Audit needs to tell you what happened everywhere, always.

The teams that do not have to scramble built provenance into the harness from day one.

Logging is not provenance

The first failure most organizations make is conflating the two. Logging is what application developers have been doing for decades: write a string to a stream when something interesting happens. The string contains what the developer thought to put in it. The stream is searched after the fact when somebody needs to investigate.

Logging is fine for what it does. It is not what audit needs. Logs are sampled. They are unstructured. They are written by the same engineers whose decisions are being investigated. They have gaps. They are usually wrong about what mattered, because the developer did not know what would matter at the time the log line was written. They are almost never sufficient to reconstruct a decision tree end-to-end. They are evidence, not record.

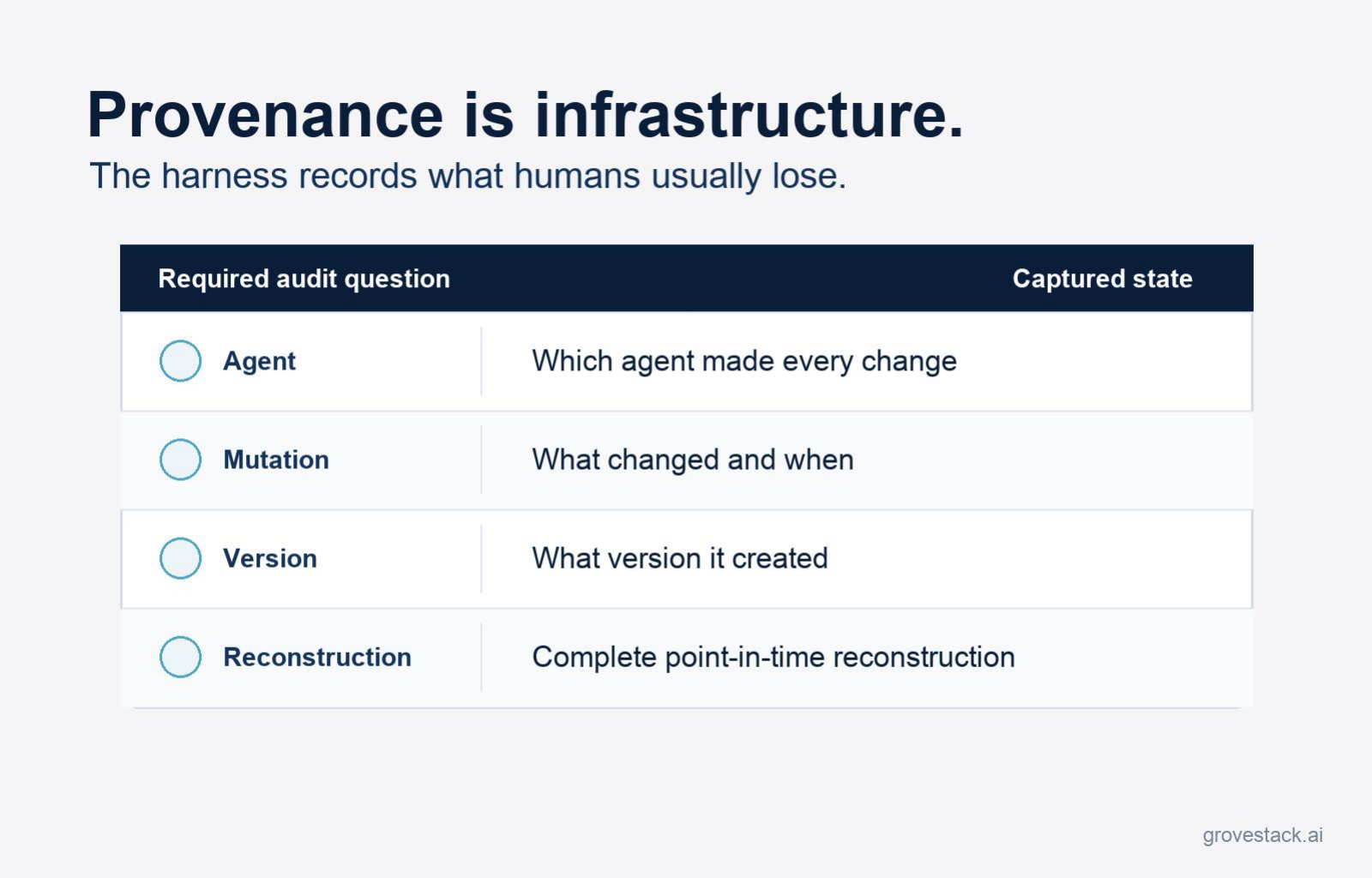

Provenance is something different. Provenance is the structured, complete history of every value the system produced — when it was set, by which actor, with which inputs, against which version of which artifact, leading to which downstream value. Provenance is not added to the system after the fact. It is a property of how the system stores and mutates state. Every mutation creates a new version. Every version is preserved with the source that created it. Every value in the current state can be traced backward through the mutation log to its origin.

The W3C codified this in the PROV Data Model: entities, activities, and agents, with the relationships that bind them — an entity was generated by an activity, an activity was associated with an agent. Production data systems implement the same idea at scale through standards like OpenLineage, which Airflow, Spark, and dbt all support. The pattern is established. The harness applies it to agent workflow state.

The difference between logging and provenance, in audit terms, is the difference between "we have notes" and "we have the record." Notes can be defended in a friendly review. The record stands on its own.

What the harness produces

A well-designed harness treats state as immutable. Every mutation produces a new version. The old value is preserved. The state manager maintains a monotonically increasing version counter, and every mutation records the version at which it occurred. The mutation log is the ordered sequence of those entries, and each entry captures, at minimum: which version, which timestamp, which field, which old value, which new value, and which source produced the change.

Source is the load-bearing column. In a single-actor system, source is one component. In a multi-agent system, source is the agent identity that ran the step that produced the mutation. The audit trail is not "this value changed at 14:32." It is "this value changed at 14:32 because agent X, executing step Y of workflow Z, with input W, produced output V." Every layer of that statement is recoverable from the mutation log alone, without re-running the workflow, without parsing logs, without consulting any human.

Provenance extends the mutation log into a tracing facility. For any value in the current state, you can walk backward: when it was first set, how many times it was modified, which components touched it, and what the intermediate values were. The trace stops at the origin — the first source that wrote the value, with the input that produced it.

Point-in-time reconstruction falls out of this design for free. The mutation log is the source of truth. The current state is a derived view, computed by replaying mutations from the beginning or from a snapshot. Any historical state can be reconstructed by replaying up to a particular version. The harness can answer "what did the system know at 13:15 on Tuesday" without storing a separate snapshot for that moment, because the mutation log already contains what it needs.

For audit, the consequence is that the trail is complete by construction. There is no missing commit message because there is no commit step where a message could be missing. There is no undocumented decision because every mutation carries its source. There is no "ask Steve, he remembers" because the harness remembers, structurally, in a format that does not depend on Steve.

The audit story this changes

The compliance problem has historically been one of weak evidence. The auditor asks how a decision was made. The team produces the artifacts they have: a JIRA ticket, a Slack thread, a code review, a release note. The artifacts mostly add up to the story. The auditor accepts. Everybody knows that the artifacts are after-the-fact reconstructions and that the actual decision was made informally between human actors. The audit is a ritual that confirms the team had reasonable practices.

Once AI participates in the pipeline, that ritual stops working. The auditor asks how a decision was made and the team has to answer for an actor that did not produce a JIRA ticket, did not show up in the Slack thread, and did not write a code review. The artifacts that used to add up no longer cover the surface of what the regulator is asking about. Either the team produces a story that is partially fictional — saying "the engineer reviewed it" when the truth is "the engineer accepted what the agent produced" — or the team admits the gap and the regulator finds the gap unacceptable.

The harness changes this story by changing what the artifact set is. The audit is no longer asking the team to reconstruct a decision tree from scattered evidence. The audit is asking the team to query the mutation log. Which agent produced this output? At which version of which spec? With which inputs? With which intermediate states? Every answer is a structured query against a structured record. The auditor receives a complete, time-ordered, source-attributed history of every value the system produced.

In sectors where the regulator's tolerance for "we have notes" was already low — financial services, healthcare, defense, and increasingly any sector where AI-mediated decisions affect a customer outcome — this is the difference between AI being adoptable and AI being unadoptable. A bank cannot ship an agent that participates in credit decisions if the bank cannot reconstruct, on demand, exactly what the agent did and why. A hospital cannot deploy an agent that touches a clinical pathway without the equivalent. A defense contractor cannot run agents in environments where the audit requirement is structural.

The harness makes those deployments survivable. Without it, they are not.

Why this is also better than what came before

The audit story so far has framed the harness as catching up to a regulatory bar. That framing understates what is happening. In most organizations, manual development practices produced audit trails that were always partial — full of things engineers thought to write down, full of gaps where they did not. The harness does not just match that bar. It exceeds it, because the trail is produced by the system rather than by the engineer's memory and discipline.

A human engineer who fixes a bug at 4:55 on a Friday writes a commit message that is sometimes accurate, sometimes terse, sometimes wrong. The message is whatever the engineer thought to write. The harness, processing the same fix, records the spec version that drove the change, the input the agent received, the output the agent produced, the validation gates the output passed, and the version of every downstream value the change affected. The engineer's commit message is one row in a structured execution history. The history exists whether the engineer wrote anything or not.

Auditability becomes a property of the build, not a discipline of the team. That is a significant inversion. In the old model, audit quality was a function of how careful the team was. Some teams were careful. Most were not. In the new model, audit quality is a function of whether the harness is in place. Teams that built the harness produce the trail. Teams that did not, do not. The variance moves from team-by-team behavior to a structural decision the organization makes once.

For organizations that already have a strong compliance culture, this is upside they did not expect. They were prepared to lose ground when AI entered the pipeline, and they are gaining it instead — because the AI is constrained to operate inside an environment that records everything by design, and the environment turns out to be a stricter discipline than the manual process it replaced.

The diagnostic question

The compliance question is not "can we use AI?" It is "can we prove what the AI did?"

The diagnostic for your own organization is concrete. Pick the most consequential agent operation you currently run. Pick a specific output it produced last week. Without re-running the workflow, can you produce: which agent created it, which spec version was active, which inputs the agent received, which intermediate state values the run produced, and which downstream values are now traceable to it? If you can produce all of that from existing artifacts in less than ten minutes, the harness is doing its job. If you cannot — if the answer requires reconstructing the run, parsing logs, or asking the engineer who set it up — the audit gap is already there, regardless of whether the regulator has noticed yet.

The gap will not stay invisible. Either an internal compliance review will surface it, or an external regulator will, or — more often — a customer will ask a question that requires the answer and the team will be unable to deliver it. The cost of fixing the gap after the question lands is dramatically higher than the cost of having built the harness with provenance from the start.

For financial services, healthcare, and defense, this is not optional infrastructure. It is the prerequisite that makes AI adoption survivable at all. For the sectors where the regulatory bar is rising — and the bar is rising — the prerequisite is becoming universal on a horizon shorter than most strategic plans.

Can you trace every AI decision in your development pipeline?